Google can make you. And break you. This Internet Art application from late 2004 which I entitled Photo Noise, was written in PHP and was built upon the Google SOAP Search API service which they nuked last September. The browser portion of Photo Noise still works so you can crawl a static collection of photos, but the server-side module which is supposed to run every 5 minutes and locate fresh ones needs to be redone. I could replace it with the new Google AJAX Search API service, or switch to Yahoo API, Bing, etc. Anyone want to help? Not brain surgery to redo it, just haven't gotten a round tuit and still not sold on which search service to go with. I'd rather not rebuild this thing every few years but perhaps impossible to avoid.

My application works like this... [programming jargon alert but will simplify the best I can] There are 2 layers - the server and the client. The server piece runs every 5 minutes, doing the Google-API web search (they never supported image search). The search terms contain default photograph filenames which I randomly generate...

Sony - DSC00253.JPG

Canon - IMG_0110.JPG

Casio - CIMG0039.JPG

Konica - PICT0340.JPG

Fuji - DSCF0632.JPG

Nikon - DSCN0100.JPG

...and so forth. The searches target various result depth levels for variation purposes (page 3, page 10, etc). An attempt is made to pull one image out of the page, sometimes even following a thumbnail link. Its candidacy is verified, and then the image URL address (not the actual image) is added onto the end of a long file containing about 5000 such URLs. This queue file is managed FIFO style (first in, first out) so its always in flux and never grows too large. There is also some file-locking code here and other 'just in case' stuff.

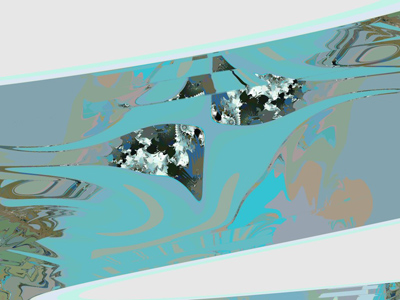

The client piece runs when the Photo Noise page loads in the browser. It reads the image URL file, at a position determined by a browser cookie which stores the last image shown. The loaded image url is not <img> linked in the browser, because many of the found photos have hot-link protection, so I wrote a PHP hack that streams in the image bytes and flushes that to the page. Once the image is finally shown, you can't see it again (unless you manipulate the cookie). You can't go back or refresh, doing so just grabs the next one in the queue. Nor is meta data provided about the photo. The photo is merely shown framed on a blank page/wall with a small menu at top-left. A 20-second 'auto' refresh mode was provided there so you didn't need to do it manually.

Some of these design decisions were meant to minimize the dark side of appropriation. People's photographs were indeed being pilfered and shown without credit, not to mention bandwidth being stolen from the owners. However each visitor could only see a photo once, spreading the piracy thin. No image files were actually stored on my server, just their addresses. And if you do the math, each URL address stays in the queue for only about a week. Of course the Photo Noise page would load faster if the images were cached locally, but eventually decided that I enjoyed the various load speeds, since they relate to server location (universities, high schools, third world countries, corporations, etc.) and often thus to subject matter.

Photo Noise was probably a horrible title. The little writeup I gave it, rambling about encapsulation and passivity, was also a bit eccentric. I try to make work that can be viewed from many perspectives, and I purposely document my pieces here with only 1 or 2 possible reads. It has been standard practice for web artists to present their pieces with only title, materials, and dimensions (often just the title). I am typically more descriptive and playful, which is something I've done on the web since 1998, publishing thousands of photos with accompanying text to places like ebay and miniarcade. To me these documentation choices are significant, and often I choose with subtlety so as to invite but not hinder discovery. Anyhow one writeup the piece got at neural.it was awesomely entitled "Photo Noise, the Amateurial Digital Pictures Narrative" which probably nails it.

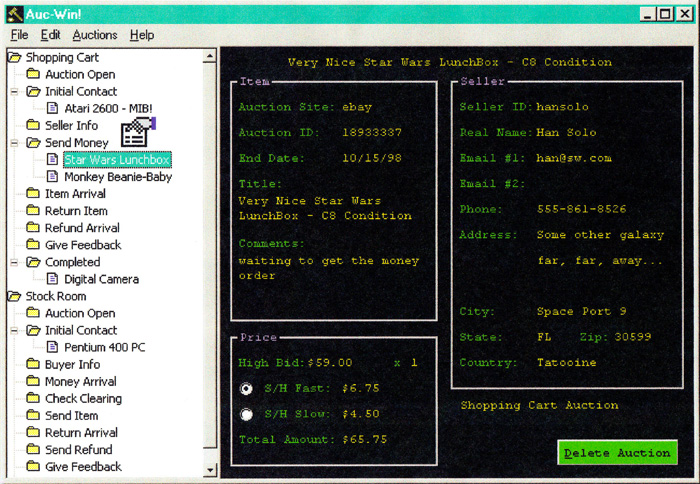

The above image was an early screen capture. I recently posted an archive of 500 screen shots. Over the years I also saved a handful of them and made a Favorites album. Photo Noise has been almost continually up and running since 2004, serving hundreds of thousands of photographs to visitors. Many kind friends have claimed to enjoy it. It was blogged about a few times. Rhizome.org and RunMe.org absorbed it into their archives. Long ago it was shown in gallery shows (here and here) and was given a first place award by Adam J. Lerner (current director of the Denver Museum of Contemporary Art). For the gallery exhibitions I wrote an additional piece of C# software to act as a Photo Noise 'agent', monitoring network outages or page loading errors, re-spawning browser instances as needed.

I have derived much enjoyment from it, as creator and voyeur both. This is one of the only artworks I made that depends upon external services at run-time in order to be viewed, probably because I'm an inventor type who loathes maintenance duty :)